This Kalman Filter Example post is the first in a series where we deploy the Kalman Filter in pairs trading. Be sure to follow our progress in Part 2: Pairs Trading in Zorro, and Part 3: Putting It All Together.

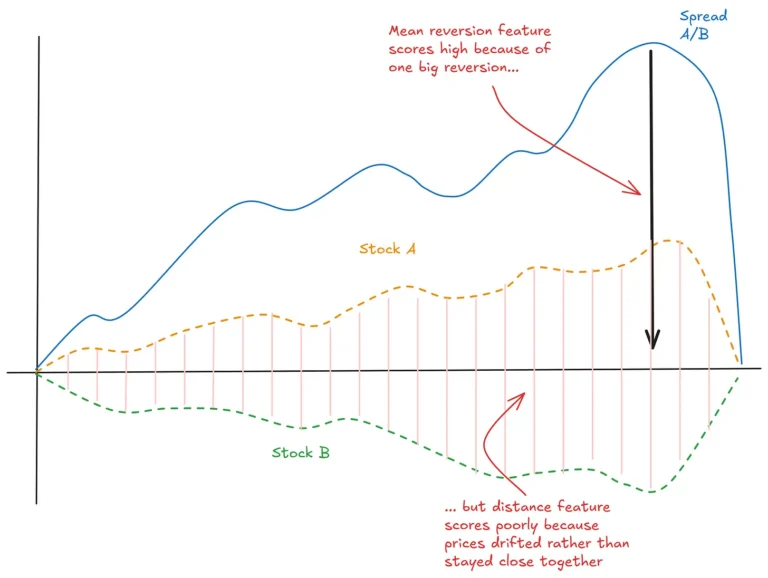

Anyone who’s tried pairs trading will tell you that real financial series don’t exhibit truly stable, cointegrating relationships.

If they did, pairs trading would be the easiest game in town. But the reality is that relationships are constantly evolving and changing. At some point, we’re forced to make uncertain decisions about how best to capture those changes.

One way to incorporate both uncertainty and dynamism in our decisions is to use the Kalman filter for parameter estimation.

The Kalman filter is a state space model for estimating an unknown (‘hidden’) variable using observations of related variables and models of those relationships. The Kalman filter is underpinned by Bayesian probability theory and enables an estimate of the hidden variable in the presence of noise.

There are plenty of tutorials online that describe the mathematics of the Kalman filter, so I won’t repeat those here (this article is a wonderful read). Instead, this Kalman Filter Example post will show you how to implement the Kalman filter framework to provide a dynamic estimate of the hedge ratio in a pairs trading strategy. I’ll provide just enough math to follow the implementation.

For this Kalman Filter example, we need four ingredients:

- A vector of our observed variable

- A vector of our hidden variable

- A state transition model (which describes how the hidden variable evolves from one state to the next)

- An observation model (which relates the hidden variable to the observed one; here it is the other price series plus a constant, so the hidden variable holds both a hedge coefficient and an intercept)

For our hedge ratio/pairs trading application, the observed variable is one of our price series, $p_1$, and the hidden variable is our hedge ratio, $\beta$. The observed and hidden variables are related by the familiar spread equation:

$$p_1 = \beta p_2 + \epsilon$$

where $\epsilon$ is noise (in our pairs trading framework, we are essentially making bets on the mean reversion of $\epsilon$). In the Kalman framework, the other price series, $p_2$, provides our observation model.

We also need to define a state transition model that describes the evolution of $\beta$ from one time period to the next. If we assume that $\beta$ follows a random walk, then our state transition model is simply

$$\beta_t = \beta_{t-1} + \omega$$

where $\omega$ is noise.
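Putting the pieces together, here is the model written out with the intercept that the R code below also estimates. The notation is ours rather than the post’s (an assumption): $\alpha_t$ is the intercept, $\mathbf{x}_t$ is the observation row, $\boldsymbol{\beta}_t$ stacks the hedge ratio and intercept, and $V_e$, $V_w$ correspond to Ve and Vw in the code.

$$
\begin{aligned}
p_{1,t} &= \mathbf{x}_t \boldsymbol{\beta}_t + \epsilon_t, & \epsilon_t &\sim N(0, V_e) \\
\boldsymbol{\beta}_t &= \boldsymbol{\beta}_{t-1} + \boldsymbol{\omega}_t, & \boldsymbol{\omega}_t &\sim N(\mathbf{0}, V_w) \\
\mathbf{x}_t &= \begin{bmatrix} p_{2,t} & 1 \end{bmatrix}, & \boldsymbol{\beta}_t &= \begin{bmatrix} \beta_t & \alpha_t \end{bmatrix}^\top
\end{aligned}
$$

In the code, the state noise covariance is set to $V_w = \frac{\delta}{1-\delta} I_2$.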

Here’s the well-known iterative Kalman filter algorithm.

For every time step:

- Predict the next state of the hidden variable given the current state and the state transition model

- Predict the state covariance

- Predict the next value of the observed variable given the prediction for the hidden variable and the observation model

- Predict the variance of the observed variable (the measurement variance)

- Calculate the error between the observed and predicted values of the observed variable

- Calculate the Kalman gain

- Update the estimate of the hidden variable

- Update the state covariance estimate
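The steps above can be sketched as a single R function. This is a minimal sketch rather than the post’s own code: the function name kalman_step, the synthetic price series and the starting covariance P = diag(2) are assumptions made for illustration. It simulates a pair with a known hedge ratio of 1.5, and the filter’s hedge estimate should settle near that value.

```r
# One iteration of the Kalman filter for y_t = x_t %*% beta_t + eps,
# beta_t = beta_{t-1} + omega (random walk state).
# beta: state (hedge ratio, intercept); P: state covariance;
# x_t: observation row c(price_2, 1); y_t: observed price_1.
kalman_step <- function(beta, P, x_t, y_t, Vw, Ve) {
  beta_pred <- beta                              # 1. predict state (random walk)
  R <- P + Vw                                    # 2. predict state covariance
  y_hat <- sum(x_t * beta_pred)                  # 3. predict observation
  Q <- as.numeric(t(x_t) %*% R %*% x_t) + Ve     # 4. predict measurement variance
  e <- y_t - y_hat                               # 5. prediction error
  K <- (R %*% x_t) / Q                           # 6. Kalman gain (2 x 1)
  beta_new <- as.numeric(beta_pred + K * e)      # 7. update state estimate
  P_new <- R - K %*% t(x_t) %*% R                # 8. update state covariance
  list(beta = beta_new, P = P_new, e = e, Q = Q)
}

# Hypothetical synthetic pair: true hedge ratio 1.5, true intercept 2
set.seed(42)
n <- 500
p2 <- 50 + cumsum(rnorm(n, sd = 0.5))
p1 <- 1.5 * p2 + 2 + rnorm(n, sd = 0.5)

delta <- 1e-5
Vw <- delta / (1 - delta) * diag(2)
Ve <- 0.25                 # assumed equal to the simulated noise variance
beta <- c(0, 0)
P <- diag(2)               # assumed diffuse start (the post starts from zeros)
hedge <- numeric(n)

for (i in seq_len(n)) {
  step <- kalman_step(beta, P, c(p2[i], 1), p1[i], Vw, Ve)
  beta <- step$beta
  P <- step$P
  hedge[i] <- beta[1]
}

mean(tail(hedge, 100))     # should sit close to the true hedge ratio of 1.5
```

The function returns e and Q as well, because those are exactly the by-products the trading signals are later built from.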

To start the iteration, we need initial values for the covariances of the measurement and state equations. Methods exist to estimate these from data, but for our purposes we will start with some values that result in a relatively slowly changing hedge ratio. To make the hedge ratio change faster, increase the value of delta (which scales the state covariance Vw) or decrease Ve in the R code below. The initial estimates of these values are as close to ‘parameters’ as we get in our Kalman filter framework.
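To see why delta and Ve push in opposite directions, here is a hypothetical scalar illustration (our simplification, not the post’s two-state model): one hidden state with its regressor fixed at 1. It iterates the covariance recursion until it settles and reports the steady-state Kalman gain, which is the fraction of each prediction error folded into the estimate. With real prices as the regressor the numbers change, but the ordering does not.

```r
# Hypothetical scalar illustration: one hidden state, regressor fixed at 1.
# Returns the steady-state Kalman gain after iterating the covariance recursion.
steady_gain <- function(delta, Ve, iters = 1000) {
  Vw <- delta / (1 - delta)   # same scaling as the post's Vw
  P <- 0                      # start from zero state covariance, as in the post
  for (k in seq_len(iters)) {
    R <- P + Vw               # predicted state variance
    Q <- R + Ve               # predicted measurement variance (regressor = 1)
    K <- R / Q                # Kalman gain
    P <- R - K * R            # updated state variance
  }
  K
}

gains <- c(base       = steady_gain(delta = 1e-4, Ve = 1e-3),
           more_delta = steady_gain(delta = 1e-3, Ve = 1e-3),
           more_Ve    = steady_gain(delta = 1e-4, Ve = 1e-2))
round(gains, 3)
# approximately: base 0.270, more_delta 0.618, more_Ve 0.095
```

A larger gain means each new price moves the estimate further, so raising delta speeds the hedge ratio up and raising Ve slows it down.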

Here’s some R code for implementing the Kalman filter.

The two price series used are daily adjusted closing prices for the “Hello world” of pairs trading: GLD and GDX (you can download the data at the end of this post).

First, read in and take a look at the data:

library(xts)
path <- "C:/Path/To/Your/Data/"
assets <- c("GLD", "GDX")
df1 <- xts(read.zoo(paste0(path, assets[1], ".csv"), tz="EST", format="%Y-%m-%d", sep=",", header=TRUE))
df2 <- xts(read.zoo(paste0(path, assets[2], ".csv"), tz="EST", format="%Y-%m-%d", sep=",", header=TRUE))
xy <- merge(df1$Close, df2$Close, join="inner")
colnames(xy) <- assets
plot(xy, legend.loc=1)

Here’s what the data look like:

Looks OK at first glance.

Here’s the code for the iterative Kalman filter estimate of the hedge ratio:

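x <- xy[, assets[1]]                # GLD: the observation model (regressor)
y <- xy[, assets[2]]                # GDX: the observed variable
x$int <- rep(1, nrow(x))            # constant column, so the state also holds an intercept

delta <- 0.0001                     # larger delta -> faster-changing hedge ratio
Vw <- delta/(1-delta)*diag(2)       # covariance of the state transition noise
Ve <- 0.001                         # variance of the measurement noise

R <- matrix(rep(0, 4), nrow=2)      # predicted state covariance
P <- matrix(rep(0, 4), nrow=2)      # updated state covariance
beta <- matrix(rep(0, nrow(y)*2), ncol=2)  # hidden state: hedge ratio, intercept
y_est <- rep(0, nrow(y))            # measurement predictions
e <- rep(0, nrow(y))                # prediction errors
Q <- rep(0, nrow(y))                # prediction error variances

for(i in 1:nrow(y)) {
  if(i > 1) {
    beta[i, ] <- beta[i-1, ]        # state transition
    R <- P + Vw                     # state cov prediction
  }
  y_est[i] <- x[i, ] %*% beta[i, ]  # measurement prediction
  Q[i] <- x[i, ] %*% R %*% t(x[i, ]) + Ve  # measurement variance prediction
  # error between observation of y and prediction
  e[i] <- as.numeric(y[i]) - y_est[i]
  K <- R %*% t(x[i, ]) / Q[i]       # Kalman gain
  # state update
  beta[i, ] <- beta[i, ] + K * e[i]
  P <- R - K %*% x[i, ] %*% R       # state covariance update
}

beta <- xts(beta, order.by=index(xy))
plot(beta[2:nrow(beta), 1], type='l', main = 'Kalman updated hedge ratio')
plot(beta[2:nrow(beta), 2], type='l', main = 'Kalman updated intercept')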

And here is the resulting plot of the dynamic hedge ratio:

The value of this particular Kalman filter example is immediately apparent – you can see how drastically the hedge ratio changed over the years.

We could use that hedge ratio to construct our signals for a trading strategy, but we can actually use the other by-products of the Kalman filter framework to generate them directly (hat tip to Ernie Chan for this one):

The prediction error (e in the code above) is equivalent to the deviation of the spread from its predicted value. Some simple trade logic could be to buy and sell our spread when this deviation is very negative and positive respectively.

We can relate the actual entry levels to the standard deviation of the prediction error. The Kalman routine also computes the standard deviation of the error term for us: it is simply the square root of Q in the code above.
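Since Q is the filter’s own estimate of the prediction error variance, dividing e by the square root of Q puts every error on a common scale of standard deviations. A minimal sketch on made-up numbers (e_toy and Q_toy are hypothetical, not from the GLD/GDX data), using a simple level rule; the backtest code later in the post uses threshold crossings instead:

```r
# Hypothetical prediction errors and their variances
e_toy <- c(0.2, -0.6, 1.5, 0.1, -2.0)
Q_toy <- c(1.0, 0.25, 1.0, 0.04, 1.0)

z_toy <- e_toy / sqrt(Q_toy)   # error measured in its own standard deviations
# level rule: sell the spread above +1 sd (-1), buy it below -1 sd (1)
sig_toy <- ifelse(z_toy > 1, -1, ifelse(z_toy < -1, 1, 0))
sig_toy
# 0  1 -1  0  1
```

Note that the second error (-0.6) triggers a buy even though the fifth (-2.0) is larger in raw terms; what matters is the size of each error relative to its own predicted spread.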

Here’s a plot of the trading signals at one standard deviation of the prediction error (we need to drop a few leading values as the Kalman filter takes a few steps to warm up):

# plot trade signals
e <- xts(e, order.by=index(xy))
sqrtQ <- xts(sqrt(Q), order.by=index(xy))
signals <- merge(e, sqrtQ, -sqrtQ)
colnames(signals) <- c("e", "sqrtQ", "negsqrtQ")
plot(signals[3:length(index(signals))], ylab='e', main = 'Trade signals at one standard deviation', col=c('blue', 'black', 'black'), lwd=c(1,2,2))

Cool! Looks OK, except the number of signals greatly diminishes in the latter half of the simulation period. Later, we might come back and investigate a more aggressive signal, but let’s press on for now.

At this point, we’ve got a time series of trade signals corresponding to the error term being greater than one standard deviation from its (estimated) mean. We could run a vectorised backtest by calculating positions corresponding to these signals, then determine the returns of holding those positions.

In fact, let’s do that next:

# vectorised backtest
# sell (-1) when e crosses above +sqrtQ, buy (1) when e crosses below -sqrtQ, otherwise 0
sig <- ifelse((signals$e > signals$sqrtQ) & (lag.xts(signals$e, 1) < lag.xts(signals$sqrtQ, 1)), -1,
              ifelse((signals$e < signals$negsqrtQ) & (lag.xts(signals$e, 1) > lag.xts(signals$negsqrtQ, 1)), 1, 0))
colnames(sig) <- "sig"

## trick for getting only the first signals
sig[sig == 0] <- NA
sig <- na.locf(sig)
sig <- diff(sig)/2
plot(sig)

## simulate positions and pnl
# lag both the signal and the hedge ratio so each position uses only information available at entry
sim <- merge(lag.xts(sig, 1), lag.xts(beta[, 1], 1), x[, 1], y)
colnames(sim) <- c("sig", "hedge", assets[1], assets[2])
sim$posX <- sim$sig * -1000 * sim$hedge
sim$posY <- sim$sig * 1000
# carry each position forward until the next signal
sim$posX[sim$posX == 0] <- NA
sim$posX <- na.locf(sim$posX)
sim$posY[sim$posY == 0] <- NA
sim$posY <- na.locf(sim$posY)
pnlX <- sim$posX * diff(sim[, assets[1]])
pnlY <- sim$posY * diff(sim[, assets[2]])
pnl <- pnlX + pnlY
plot(cumsum(na.omit(pnl)), main="Cumulative PnL, $")

Just a quick explanation of my hacky backtest…

The ugly nested ifelse statement creates a time series of trade signals in which sells are represented as -1, buys as 1 and no signal as 0. A buy signal is the prediction error crossing below its -1 standard deviation level from above; a sell signal is the prediction error crossing above its +1 standard deviation level from below.

The problem with this signal vector is that we can get consecutive sell signals and consecutive buy signals. We don’t want to muddy the waters by holding more than one position at a time, so we use a little trick (the lines after the "## trick for getting only the first signals" comment): first we replace any zeroes with NA, then we use the na.locf function to fill the NA values forward with the last real value. We then recover the original (non-consecutive) signals by taking the diff and dividing by 2.

If that seems odd, just write down on a piece of paper a few signals of -1, 1 and 0 in a column and perform on them the operations described. You’ll quickly see how this works.
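Here is that paper exercise done in R on a hypothetical signal vector (raw_toy and the other names are made up for illustration). It uses plain numeric vectors rather than xts so each step is easy to print, with zoo’s na.locf doing the forward fill; na.rm = FALSE keeps the leading NA so the length is preserved.

```r
library(zoo)  # for na.locf

raw_toy <- c(0, -1, 0, -1, 1, 0, 1, 1, -1)   # hypothetical raw signals
filled <- raw_toy
filled[filled == 0] <- NA                     # zeroes become NA
filled <- na.locf(filled, na.rm = FALSE)      # carry the last signal forward
# filled: NA -1 -1 -1  1  1  1  1 -1
trades <- c(NA, diff(filled) / 2)             # pad so the length matches, like diff() on xts
trades
# NA NA  0  0  1  0  0  0 -1
```

The repeated -1 (position 4) and the repeated 1 (position 8) become 0, so only alternating trades survive. In this toy run the very first signal (position 2) also turns into NA, because there is nothing before it to difference against.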

Then, we calculate our positions in each asset according to our spread and signals, taking care to lag our signals so that we don’t introduce look-ahead bias. We’re trading 1,000 units of our spread per trade. Our estimated profit and loss is just the sum of the price differences multiplied by the positions in each asset.
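For a single day and a single position, that arithmetic looks like the following sketch. The helper spread_pnl and every number in it are hypothetical; the sign convention follows the backtest code (a signal of 1 means long 1,000 units of y and short 1,000 times hedge units of x).

```r
# Hypothetical helper: one-day P&L of a spread position, using the same
# position sizing as the backtest (units of y, units * hedge of x).
spread_pnl <- function(sig, hedge, dX, dY, units = 1000) {
  posX <- sig * -units * hedge   # short x when long the spread
  posY <- sig * units            # long y when long the spread
  posX * dX + posY * dY          # position times price change, summed over both legs
}

spread_pnl(sig = 1, hedge = 0.25, dX = 2, dY = 0.75)    # -250 * 2 + 1000 * 0.75 = 250
spread_pnl(sig = -1, hedge = 0.25, dX = 2, dY = 0.75)   # the short spread loses 250
```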

Here’s the result:

Looks interesting!

Recall that our trading signals were few and far between in the latter half of the simulation. If we plot the signals, we see that we were actually holding the spread for well over a year at a time:

I doubt we’d want to trade the spread this way, so let’s make our signals more aggressive:

# more aggressive trade signals
signals <- merge(e, .5*sqrtQ, -.5*sqrtQ)
# keep the same column names so the backtest code above can be re-run unchanged
colnames(signals) <- c("e", "sqrtQ", "negsqrtQ")
plot(signals[3:length(index(signals))], ylab='e', main = 'Trade signals at half a standard deviation', col=c('blue', 'black', 'black'), lwd=c(1,2,2))

Better! A smarter way to do this would probably be to adapt the trade level (or levels) to the recent volatility of the spread – I’ll leave that as an exercise for you.

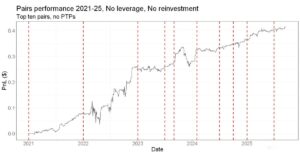

These trade signals lead to this impressive and highly dubious equity curve:

Why is it dubious?

Well, you probably noticed that there are some pretty out-there assumptions in this backtest. To name the most obvious:

- We’re trading at the daily closing price with no market impact or slippage

- We’re trading for free

My gut feeling is that this would need a fair bit of work to cover costs of trading – but that gets tricky to assess without a more accurate simulation tool.

Where to next?

You can see that it’s a bit of a pain to backtest, particularly if you want to incorporate costs. To be fair, there are native R backtesting solutions that are more comprehensive than my quick-n-dirty vectorised version. But in my experience none of them lets you move quite as fast as the Zorro platform, which also allows you to go from backtest to live trading with almost the click of a button.

You can see that R makes it quite easy to incorporate an advanced algorithm (well, at least I think it’s advanced; our clever readers probably disagree). But tinkering with the strategy itself (for instance, incorporating costs, trading at multiple standard deviation levels, using a timed exit, or incorporating other trade filters) is a recipe for a headache, not to mention a whole world of unit testing and bug fixing.

On the other hand, Zorro makes tinkering with the trading aspects of the strategy easy. Want to get a good read on costs? That’s literally a line of code. Want to filter some trades based on volatility? Yeah, you might need two lines for that. What about trading the spread at say half a dozen levels and entering and exiting both on the way up and on the way down? OK, you might need four lines for that.

The downside with Zorro is that it would be pretty nightmarish implementing a Kalman filter in its native Lite-C code. But thanks to Zorro’s R bridge, I can use the R code for the Kalman filter example that I’ve already written, with literally only a couple of minor tweaks. We can have the best of both worlds.

Which leads to my next post…

In Kalman Filter Example part 2, I’ll show you a basic pairs trading script in Zorro, using a more vanilla method of calculating the hedge ratio. After that, I’ll show you how to configure Zorro to talk to R and thus make use of the Kalman filter algorithm.

I’d love to know if this series is interesting for you, and what else you’d like to read about on Robot Wealth. Let us know in the comments.


Great piece

Thanks! Glad you liked it.

Are your assets x and y returns, or prices? It looks like prices, but might it not be more correct to use returns? Also, is there a name for Vw and Ve? Is it possible to relate them to anything in the tutorial you linked? Very interesting post, thank you for putting it together.

x and y are prices. The decision to use prices or returns is not so much about which one is “more correct” – rarely is something so black and white in the trading world as to deserve the label of being correct – but there are certainly implications for how you’d trade the spread in each case.

Ve is the variance of the residuals of the measurement equation. Vw is the covariance in the state transition model. We can’t observe these directly so we need to estimate them. You can “tweak” these estimates (the latter by tweaking the delta parameter) to make the filter more or less responsive. More details here: http://www2.econ.iastate.edu/tesfatsi/FLSTemporalDataMining.GMontana2009.pdf

There are also approaches to estimate these quantities directly from data. No doubt there’s an R package out there that does this for you. You can also use a training and validation set to estimate these values and see if they hold up out of sample.

Hope that helps.

I really like this post. How do you interpret the beta coefficient, since here our beta has two different components?

Hi Sarah. Thanks for reading! The two components of beta here are the slope and intercept terms from the linear regression. We use the slope component as an estimate of the dynamic hedge ratio. We don’t directly use the intercept term in the trading model, but rather than setting it to zero, including it in the regression is helpful for dealing with bias in the residuals. This is a useful article for demonstrating the intuition.

Hey Kris,

This is a fantastic article. I’ve read both the Fabian post you’ve linked and your article a few times over. In my Kalman filter implementations (and in my standard OLS regression hedges), I have found the intercept to be very confusing. I’m often returning high intercepts with relatively low beta coefficients, resulting in highly imbalanced hedge ratios. If my intercept is so high as to significantly distort my hedge, should I add it back into the hedge and re-calculate, or am I missing something? Is it ever appropriate to include the intercept in the hedge? In an OLS regression, is it appropriate to force the intercept to zero for hedging purposes? In Kalman filtering this seems less practical, as the model then requires a different implementation.

As a real world example, I am currently looking at asset X valued at $0.76235 and asset Y valued at $0.04545. My Kalman filter quickly begins producing nearly perfect y-hat values, but results in a beta of 0.0219 and an intercept of 0.02874. With such a high intercept, my hedge [X * beta] is $0.016705, which is clearly far lower than the Y value / y-hat value. Is the hedge result of ~$0.016 the appropriate hedge, or do I need to re-add the intercept to produce a hedge at essentially 1:1 with my Y values?

I may be misunderstanding the use of the intercept, but I’m finding very little explanation on the concept.

That was really interesting, thank you. I’m not an expert in the field, but could you imagine a future post implementing an R particle filtering package, which is supposed to be free from certain of the KF’s normality assumptions?

Thanks for reading, Nicolas. Sure, I can imagine doing that at some point – would be interesting to see if and how the hedge ratio evolved differently. Next time though, I think I’ll just use one of the existing state space modeling packages rather than doing it from scratch!

Fantastic work!