To Trend or Not To Trend? (Wrong question)

Someone asked me recently whether strategies based on mean reversion, trend following, and momentum are “good” or just data mining.

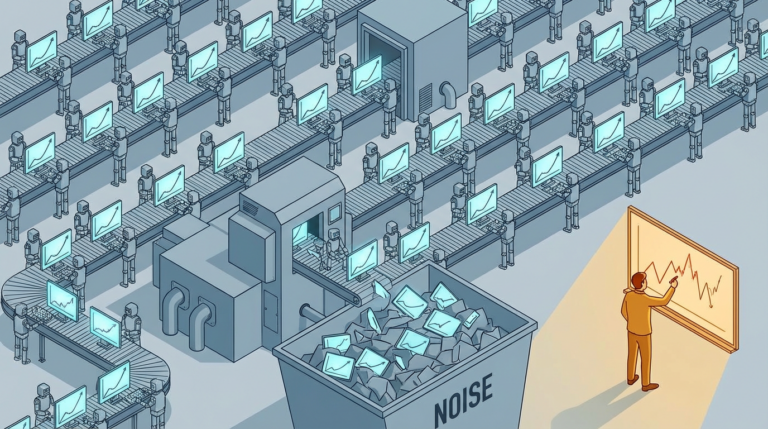

My last two articles on AI and trading research got more engagement than almost anything I’ve written. “More of the

(Most of Them Will Lose Money) AI makes it easier than ever to build trading strategies. Prompt a model, run

This week I discovered the “vibe quant” movement (or rather, it discovered me). People using LLMs to find trading strategies,

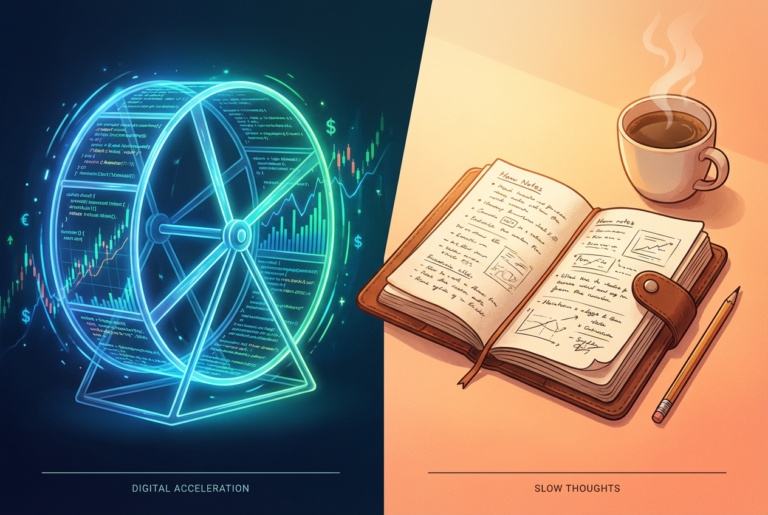

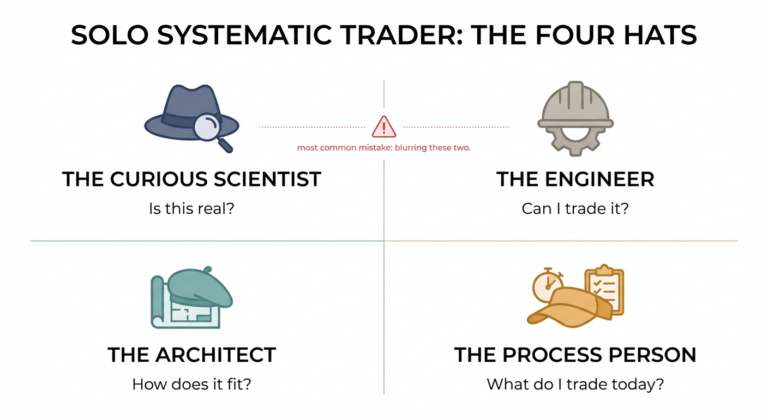

The four hats of the solo trader

At a trading firm or fund, the researcher doesn’t run the execution desk.

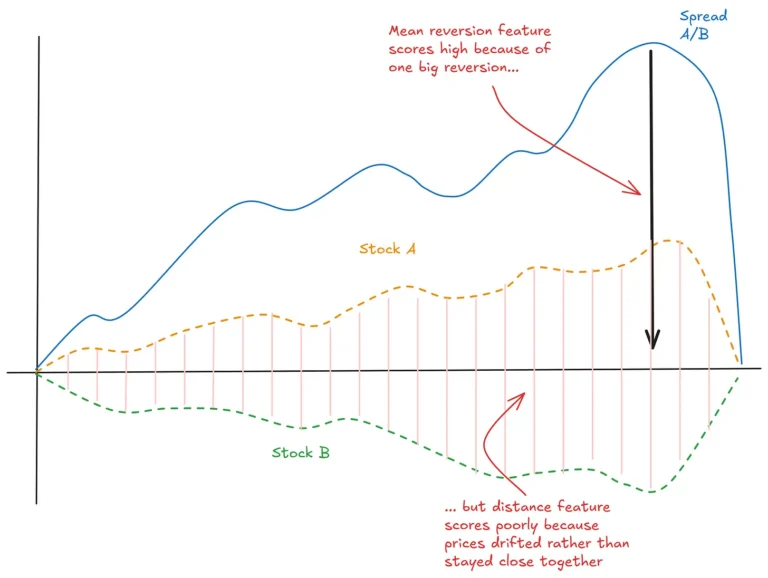

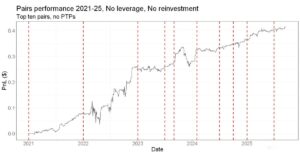

Part 3 of a series on Statistical Arbitrage for Independent Traders. Previously: In the last article, we built up a

Previously: A Tale of Two Prices (the core idea of stat arb). Last time we established that stat arb is

Part 1 of a series on Statistical Arbitrage for Independent Traders. It was the age of wisdom, it was the

What’s Past is Prologue

Let’s be honest: 2025 was a pretty good year to be a systematic trader. If you

Traders love the illusion of precision. A few bad weeks go by, and you think, “Let’s run a t-test and

When I first got interested in trading, The Whitlams were all over Australian radio, and I was making all the

How do we find edges? First, we must be clear about what constitutes a good idea. It isn’t as simple