It would be great if machine learning were as simple as feeding data to an out-of-the-box implementation of some learning algorithm, then standing back and admiring the predictive utility of the output. As anyone who has dabbled in this area will confirm, it is never that simple. We have features to engineer and transform (no trivial task – see here and here for an exploration with applications for finance), not to mention the vagaries of dealing with data that is non-Independent and Identically Distributed (non-IID). In my experience, landing on a model that fits the data acceptably at the outset of a modelling exercise is unlikely; a little (or a lot!) of effort is usually required to tune and debug the algorithm before it achieves acceptable performance.

In the case of non-IID time series data, we also face the dilemma of how much data to use in training a predictive model. Given the non-stationarity of asset prices, if we use too much data, we run the risk of training our model on data that is no longer relevant. If we use too little data, we run the risk of building a model that is poorly estimated and fails to generalise. This raises the question:

Is there an ideal amount of data to include in machine learning models for financial prediction? I don’t know, but I doubt the answer is clear-cut, since we never know when the underlying process is about to undergo significant change. I hypothesise that it makes sense to use the minimum amount of data that leads to acceptable model performance, and testing this is the subject of this post.

How Much Data?

In classical data science, model performance generally improves as the amount of training data is increased. However, as mentioned above, due to the non-IID nature of the data we use in finance, this happy assumption is not necessarily applicable. My theory is that using too much data (that is, using a training window that extends far into the past) is actually detrimental to model performance.

In order to explore this idea, I decided to build a model based on previous asset returns and measures of volatility. The volatility measure that I used is the 5-period Average True Range (ATR) minus the 20-period ATR normalized over the last 50 periods. The data used is the EUR/USD daily exchange rate sampled at 9:00am GMT between 2006 and 2016.
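
For readers who want to build a comparable feature, here is a minimal sketch of one way to compute it in base R. It is not the code that produced the data file used in this post. The inputs `high`, `low` and `close`, the helper names, the simple moving average of the true range, and the use of the normal CDF (`pnorm`) in the scaling step are all assumptions; the scaling itself follows the formula given in the comments below.

```r
# Sketch only: simple-average ATR and a normal CDF are assumptions,
# not necessarily what was used to build the atrRegime column.
true_range <- function(high, low, close) {
  prev_close <- c(NA, head(close, -1))
  # na.rm = TRUE lets the first bar fall back to high - low
  pmax(high - low, abs(high - prev_close), abs(low - prev_close), na.rm = TRUE)
}

rolling_mean <- function(x, n) {
  out <- rep(NA_real_, length(x))
  if (length(x) >= n) {
    for (i in n:length(x)) out[i] <- mean(x[(i - n + 1):i])
  }
  out
}

atr_regime <- function(high, low, close, fast = 5, slow = 20, lookback = 50) {
  tr  <- true_range(high, low, close)
  raw <- rolling_mean(tr, fast) - rolling_mean(tr, slow)  # ATR(5) - ATR(20)
  out <- rep(NA_real_, length(raw))
  for (i in seq_along(raw)) {
    if (i < lookback) next
    w <- raw[(i - lookback + 1):i]
    if (anyNA(w)) next
    iqr <- IQR(w)
    if (iqr == 0) next
    # 2 * cdf(0.5 * (x_i - median) / IQR) - 1, bounded in [-1, 1]
    out[i] <- 2 * pnorm(0.5 * (raw[i] - median(w)) / iqr) - 1
  }
  out
}

# Usage on synthetic prices (made-up data, for illustration only)
set.seed(1)
n     <- 200
close <- 1.3 + cumsum(rnorm(n, sd = 0.005))
high  <- close + abs(rnorm(n, sd = 0.003))
low   <- close - abs(rnorm(n, sd = 0.003))
regime <- atr_regime(high, low, close)
```

Scaling by the median and inter-quartile range of a trailing window keeps the feature bounded and comparable across volatility regimes, at the cost of discarding the first `slow + lookback - 2` observations.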

The model used the previous three values of the returns and volatility series as the input features and the next day’s market direction as the target feature. I trained a simple two-class logistic regression model using R’s glm function with a time-series cross validation approach. This approach involves training the model on a window of data and predicting the outcome of the next period, then shifting the training window forward in time by one period. The model is then retrained on the new window and the next period’s outcome predicted. This process is repeated along the length of the time series. The cross-validated performance of the model is simply the performance of the next-day predictions using some suitable performance measure. I recorded the profit factor and Sharpe ratio of the model’s predictions. I used class probabilities to determine the positions for the next day as follows:

if $P_{up} \geq 0.55$, go long at open

if $P_{down} \geq 0.55$, go short at open

if $0.45 < P_{up} < 0.55$ (equivalent to $0.45 < P_{down} < 0.55$), remain flat

where $P_{up}$ and $P_{down}$ are the calculated probabilities for the next day’s market direction to be positive and negative respectively.

Positions were liquidated at the close.
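
The position rule and the two performance measures can be sketched in a few lines of R. This is an illustration under assumptions rather than the code used for the results: the function names and the toy `p_up` and `ret` vectors are hypothetical, and the rule uses `>=` as in the text, whereas the source code further down uses a strict `>`.

```r
# Map class probabilities to positions: +1 long, -1 short, 0 flat.
position_from_prob <- function(p_up, threshold = 0.55) {
  p_down <- 1 - p_up
  ifelse(p_up >= threshold, 1, ifelse(p_down >= threshold, -1, 0))
}

# Profit factor: gross profit divided by gross loss.
profit_factor <- function(pnl) sum(pnl[pnl > 0]) / abs(sum(pnl[pnl < 0]))

# Annualised Sharpe ratio of daily P&L (252 trading days assumed).
sharpe_ratio <- function(pnl, periods_per_year = 252) {
  sqrt(periods_per_year) * mean(pnl) / sd(pnl)
}

# Toy example with made-up probabilities and open-to-close returns
p_up <- c(0.60, 0.50, 0.40, 0.56, 0.47)
ret  <- c(0.010, -0.020, -0.005, -0.004, 0.003)

pos <- position_from_prob(p_up)   # long, flat, short, long, flat
pnl <- pos * ret                  # daily P&L of the rule
pf  <- profit_factor(pnl)
sr  <- sharpe_ratio(pnl)
```

Flat days contribute zero P&L, so they lower the mean and the standard deviation together; the Sharpe ratio of a rule that trades rarely is therefore estimated from very few informative observations.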

In order to investigate the effects of the size of the data window, I varied its size between 15 and 1,600 days and recorded the cross-validated performance for each case. I also recorded the average in-sample performance on each of the training windows. Slicing up the data so that the various cross-validation samples were consistent across window lengths took some effort, but this wrangling was made simpler by Max Kuhn (to whom I once again tip my hat) and his caret package.
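
To make the mechanics concrete, here is a small sketch of the rolling-window procedure written with plain `glm` on synthetic data, without caret. The data, the column names `Ret1` and `Vol1`, and the function `rolling_glm` are hypothetical. Every window length is scored on the same set of test days, which start after the longest window, so the results are comparable across lengths.

```r
# Synthetic data: one weakly informative feature and one noise feature.
set.seed(42)
n   <- 300
dat <- data.frame(Ret1 = rnorm(n), Vol1 = rnorm(n))
dat$Direction <- factor(ifelse(0.5 * dat$Ret1 + rnorm(n) > 0, "up", "down"),
                        levels = c("down", "up"))

# Fit on the `window` rows before each test day, predict that day, roll forward.
rolling_glm <- function(dat, window, first_test) {
  test_idx <- first_test:nrow(dat)
  p_up <- numeric(length(test_idx))
  for (k in seq_along(test_idx)) {
    day   <- test_idx[k]
    train <- dat[(day - window):(day - 1), ]
    # Small windows can trigger separation warnings; suppressed for the demo.
    fit <- suppressWarnings(
      glm(Direction ~ Ret1 + Vol1, data = train, family = binomial)
    )
    # With levels c("down", "up"), the fitted probability is P(up).
    p_up[k] <- predict(fit, newdata = dat[day, ], type = "response")
  }
  p_up
}

windows    <- c(20, 50, 100)
first_test <- max(windows) + 1   # common evaluation set for all windows
preds <- sapply(windows, function(w) rolling_glm(dat, w, first_test))
colnames(preds) <- windows       # one column of P(up) per window length
```

In caret the same scheme is requested with `trainControl(method = 'timeslice', fixedWindow = TRUE, horizon = 1)`, as in the source code at the end of the post; the explicit loop is only meant to show what happens at each step.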

The results are presented below.

We can see that for the smallest window lengths, the in-sample performance greatly exceeds the cross-validated performance. In other words, when we use very little data, the model fits the training data well, but fails to generalize out of sample. It has a variance problem, which is what we would expect.

Then things get interesting. As we add slightly more data in the form of a longer training window, the in-sample performance decreases, but the cross-validated performance increases, very quickly rising to meet the in-sample performance. In-sample and cross-validated performance are very similar for window lengths between 25 and 75 days. This is an important result, because when the cross-validated performance approximates the in-sample performance, we can conclude that the model is capturing the underlying signal and is therefore likely to generalise well. Encouragingly, this performance is reasonably robust across that 25-75 day range. If we had only one data point showing reasonable cross-validated performance, I wouldn’t trust that this wasn’t due to randomness. The existence of a region of reasonable performance implies that we may have a degree of confidence in the results.

As we add yet more data to our training window, we can see that the in-sample performance continues to deteriorate, eventually reaching a lower limit, and that the cross-validated performance likewise continues to decline, with a notable exception around 500 days. This suggests that as we increase the training window length, the model develops a bias problem and underfits the data.

These results are perhaps confounded by the fact that the optimal window length may be a characteristic of this particular market and the particular 10-year period used in this experiment. Actually, I feel this is quite likely. I haven’t run this experiment on other markets or time periods yet, but I strongly suspect that each market will exhibit different optimal window lengths, and that these will probably themselves vary with time. Notwithstanding this, it appears that we can at least conclude that in finance, more data is not necessarily better.

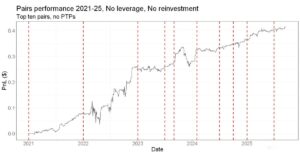

Equity Curves

I know how much algorithmic traders like to see an equity curve, so here is the model performance using a variety of selected window lengths, as well as the buy and hold equity curve of the underlying. Transaction costs are not included.

In this case, the absolute performance is nothing spectacular*. However, it demonstrates the differences in the quality of the predictions obtained using different window lengths for training the models. We can clearly see that more is not necessarily better, at least for this particular period of time.

Performance as a Function of Class Probability Threshold

It is also interesting to investigate how performance varies across the different window lengths as a function of the class probability threshold used in the trading decisions. Here is a heatmap of the model’s Sharpe ratio for various window lengths and class probability thresholds.

We can see a fairly obvious region of higher Sharpe ratios for lower window lengths and generally increasing class probability threshold. The region of the higher Sharpe ratios for longer window lengths and higher class probabilities (the upper right corner) is actually slightly misleading, since the number of trades taken for these model configurations is vanishingly small. However, we can see that when those trades do occur, they tend to be of a higher quality.
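
One way to check how thin those upper-right cells are is to count the trades that each threshold produces. The sketch below uses made-up probabilities rather than the model’s output (the `p_up` vector is hypothetical) and the same strict `>` comparison as the source code at the end of the post.

```r
# Made-up predicted probabilities clustered around 0.5, as is typical
# for a weak daily-direction classifier.
set.seed(7)
p_up <- plogis(rnorm(1000, sd = 0.2))

thresholds <- seq(0.50, 0.65, by = 0.05)

# A trade is taken when either class probability exceeds the threshold.
trade_counts <- sapply(thresholds, function(th) sum(p_up > th | (1 - p_up) > th))
names(trade_counts) <- thresholds
print(trade_counts)
```

Because the count can only fall as the threshold rises, a Sharpe ratio in a high-threshold cell may rest on a handful of trades; plotting the counts next to the heatmap makes that visible.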

Finally, here are several equity curves for a window length of 30 days and various class probability thresholds.

Conclusions

This post investigated the effects of varying the length of the training window on the performance of a simple logistic regression model for predicting the next-day direction of the EUR/USD exchange rate. Results indicated that more data does not necessarily lead to a better predictive model. In fact, there may be a case for using a relatively small window of training data to force the model to continuously re-learn and adapt to the most immediate market conditions. There appears to be a trade-off: very small windows exhibit vast differences between performance on the training set and performance on out-of-sample data, while very large windows perform poorly both in-sample and out-of-sample.

While absolute performance of the model was nothing to get excited about, the model used here was a very simple logistic regression classifier and minimal effort was spent on feature engineering. This suggests that the outcomes of this research could potentially be used in conjunction with more sophisticated algorithms and features to build a model with acceptable performance. This will be the subject of future posts.

The axiom “he who has the most data wins” applies widely in data science. This doesn’t appear to be the case when it comes to building predictive models for the financial markets. Rather, the research presented here suggests that the development and engineering of the model itself may play a far larger role in its out-of-sample performance. This implies that model performance is more a function of the skill of the developer than of the ability to obtain as much data as possible. I find that to be a very satisfying conclusion.

Source Code and Data

Here’s some source code and data if you are interested in reproducing my results. Warning: it is slightly hacky and takes a long time to run if you store all the in-sample performance data! By default I have commented out that part of the code. Download the data in CSV format here.

##############################

#### HOW MUCH DATA??? ########

##############################

library(caret)

library(deepnet)

library(foreach)

library(doParallel)

library(e1071)

library(quantmod)

library(reshape2)

library(ggplot2) # needed for the ggplot() calls in the plotting sections below

# import data

eu <- read.csv("EU_Daily.csv", header = T, stringsAsFactors = F)

# create directional statistics

eu$Direction <- factor(ifelse(eu$Returns>0, "up", "down"))

#Calculate features - lagged return and volatility values

periods <- c(1:5)

Returns <- eu$Returns

Volatility <- eu$atrRegime

Direction <- eu$Direction

lagReturns <- data.frame(lapply(periods, function(x) Lag(Returns, x)))

colnames(lagReturns) <- c('Ret1', 'Ret2', 'Ret3', 'Ret4', 'Ret5')

lagVolatility <- data.frame(lapply(periods, function(x) Lag(Volatility, x)))

colnames(lagVolatility) <- c('Vol1', 'Vol2', 'Vol3', 'Vol4', 'Vol5')

# create data set based on lagged returns and volatility indicators with next day return and direction as targets

dat <- data.frame(Returns, Direction, lagReturns, lagVolatility)

dat <- dat[-c(1:14), ] #remove zeros from initial volatility calcs

dat <- na.omit(dat)

# preserve out of sample data

# Train <- dat[1:(nrow(dat)-500), ]

# Test <- dat[-(1:(nrow(dat)-500)), ]

Train <- dat # for using all data in training set

# logistic regression models --------------------------------------------------------------

direction <- 2 # column number of direction variable

features <- c(3,4,5,8,9,10) #feature columns

returns <- 1 # returns column

windows <- c(15, 20, 25, 30, 40, 50, 75, 100, 125, 150, 200, 250, 300, 350, 400, 450, 500, 600, 700, 800, 900, 1000, 1100, 1200, 1300, 1400, 1500, 1600)

modellist.lr <- list()

PFTrain <- vector()

SharpeTrain <- vector()

j <- 1

for (i in windows) {

timecontrol <- trainControl(method = 'timeslice', initialWindow = i, horizon = 1, classProbs = TRUE,

returnResamp = 'final', fixedWindow = TRUE, savePredictions = 'final')

cl <- makeCluster(8)

registerDoParallel(cl)

set.seed(503)

modellist.lr[[j]] <- train(Train[, features], Train[, direction],

method = 'glm', family = 'binomial',

trControl = timecontrol)

#### to run the in-sample performance module, comment out the next three statements (j <- j+1, print(i) and stopCluster(cl) }) and uncomment the commented block that follows them

j <- j+1

print(i)

stopCluster(cl) }

# ctrl <- trainControl(method='none', classProbs = TRUE)

# tradesCP <- list()

# pfCP <- vector()

# srCP <- vector()

# indexes <- modellist.lr[[j]]$control$index

# for(k in c(1:length(modellist.lr[[j]]$control$index)) )

# {

# model <- train(Train[indexes[[k]], features], Train[indexes[[k]], direction],

# method = 'glm', family = 'binomial',

# trControl = ctrl)

#

# predsCP <- predict(model, Train[indexes[[k]], features], type = 'prob')

# th <- 0.5

#

# tradesCP[[k]] <- ifelse(predsCP$up > th, Train[indexes[[k]], returns], ifelse(predsCP$down > th, -Train[indexes[[k]], returns], 0)) # short trades earn the negative of the return

# pfCP[k] <- sum(tradesCP[[k]][tradesCP[[k]] > 0])/abs(sum(tradesCP[[k]][tradesCP[[k]] < 0]))

# srCP[k] <- sqrt(252)*mean(tradesCP[[k]])/sd(tradesCP[[k]])

#

# cat("\niteration:",k, "PF:",round(pfCP[k], digits = 2), "SR:",round(srCP[k], digits = 2))

#

# }

# PFTrain[j] <- mean(pfCP)

# SharpeTrain[j] <- mean(srCP)

# stopCluster(cl)

# j <- j+1

# cat("\n",i)

# }

# resampled performance - use class probabilities as a trade threshold

thresholds <- seq(0.50, 0.65, 0.005)

windowIndex <- c(1:length(windows))

pf.cv.th <- matrix(nrow = length(windowIndex), ncol = length(thresholds), dimnames = list(c(as.character(windows)), c(as.character(thresholds)))) # adjust matrix dimensions based on loop size

sr.cv.th <- matrix(nrow = length(windowIndex), ncol = length(thresholds), dimnames = list(c(as.character(windows)), c(as.character(thresholds))))

Trades.DF <- matrix(nrow = length((max(windows)+1):nrow(Train)), ncol = length(windowIndex) , dimnames = list(c(), c(as.character(windows))))

for(i in windowIndex)

{

commonData <- c((max(windows)+1):nrow(Train))

j <- 1

for(th in thresholds)

{

trades <- ifelse(modellist.lr[[i]]$pred$up[(length(modellist.lr[[i]]$pred$up)-length(commonData)+1):length(modellist.lr[[i]]$pred$up)] > th, Train$Returns[commonData], ifelse(modellist.lr[[i]]$pred$down[(length(modellist.lr[[i]]$pred$down)-length(commonData)+1):length(modellist.lr[[i]]$pred$down)] > th, -Train$Returns[commonData], 0))

plot(cumsum(trades), type = 'l', col = 'blue', xlab = 'day', ylab = 'cumP', main = paste0('Window: ',windows[i], ' Cl.Prob Thresh: ',th), ylim = c(-0.5, 1.0))

lines(cumsum(Train$Returns[commonData]), col = 'red')

pf.cv.th[i, j] <- sum(trades[trades>0])/abs(sum(trades[trades<0]))

sr.cv.th[i, j] <- sqrt(252)*mean(trades)/sd(trades)

if(isTRUE(all.equal(th, 0.525))) Trades.DF[, i] <- trades # tolerance-based test; == on seq() output can fail due to floating point error

j <- j+1

}

}

#### plot training vs cv performance - class probs

th <- 11 # threshold index

plot(PFTrain, pf.cv.th[, th])

PF.Compare <- data.frame(windows, PFTrain, pf.cv.th[, th])

colnames(PF.Compare) <- c('window', 'IS', 'CV')

SR.Compare <- data.frame(windows, SharpeTrain, sr.cv.th[, th])

colnames(SR.Compare) <- c('window', 'IS', 'CV')

# base R plots

plot(windows, SharpeTrain, type = 'l', ylim = c(-0.9, 1.5), col = 'blue')

lines(windows, sr.cv.th[, th], col = 'red')

plot(windows, PFTrain, type = 'l', ylim = c(0.8, 1.5), col = 'blue')

lines(windows, pf.cv.th[, th], col = 'red')

# nice ggplots

pfMolten <- melt(PF.Compare, id = c('window'))

srMolten <- melt(SR.Compare, id = c('window'))

pfPlot <- ggplot(data=pfMolten,

aes(x=window, y=value, colour=variable)) +

geom_line(size = 0.75) +

scale_colour_manual(values = c("steelblue3","tomato3")) +

theme(legend.title=element_blank()) +

ylab('Profit Factor') +

ggtitle("In-Sample and Cross-Validated Performance")

sharpePlot <- ggplot(data=srMolten,

aes(x=window, y=value, colour=variable)) +

geom_line(size = 1.25) +

scale_colour_manual(values = c("steelblue3","tomato3")) +

theme(legend.title=element_blank()) +

ylab('Sharpe Ratio') +

ggtitle("In-Sample and Cross-Validated Performance")

# heatmap plots of performance and thresholds

srHeat <- melt(sr.cv.th)

colnames(srHeat) <- c('window', 'threshold', 'sharpe')

srHeat$window <- factor(srHeat$window, levels = c(windows))

pfHeat <- melt(pf.cv.th[-c(15:22), ])

colnames(pfHeat) <- c('window', 'threshold', 'pf')

pfHeat$window <- factor(pfHeat$window, levels = c(windows))

sharpeHeatMap <- ggplot(data=srHeat, aes(x = window, y = threshold)) +

geom_tile(aes(fill = sharpe), colour = "white") +

scale_fill_gradient(low = "#fff7bc", high = "#e6550d", name = 'Sharpe Ratio') +

scale_x_discrete(breaks = windows[seq(2,28,2)]) +

xlab('Window Length (not to scale)') +

ylab('Class Probability Threshold') +

theme(axis.line = element_line(colour = "black"),

panel.grid.major = element_blank(),

panel.grid.minor = element_blank(),

panel.border = element_blank(),

panel.background = element_blank())

pfHeatMap <- ggplot(data=pfHeat, aes(x = window, y = threshold)) +

geom_tile(aes(fill = pf), colour = "white") +

scale_fill_gradient(low = "#e5f5f9", high = "#2ca25f", name = 'Profit Factor') +

xlab('Window Length (not to scale)') +

ylab('Class Probability Threshold') +

theme(axis.line = element_line(colour = "black"),

panel.grid.major = element_blank(),

panel.grid.minor = element_blank(),

panel.border = element_blank(),

panel.background = element_blank())

# Equity curves

#colnames(Trades.DF) <- as.character(windows)

Trades.DF <- as.data.frame(Trades.DF)

Equity <- cumsum((Trades.DF[, as.character(c(15, 30, 500, 1000, 1500))]))

Equity$Underlying <- cumsum(Train$Returns[commonData])

Equity$Index <- c(1:nrow(Equity))

EquityMolten <- melt(Equity, id = 'Index')

equityPlot <- ggplot(data=EquityMolten, aes(x=Index, y=value, colour=variable)) +

geom_line(size = 0.6) +

scale_color_manual(name = c('Window\nLength'), values = c('darkorchid3', 'dodgerblue3', 'darkolivegreen4', 'darkred', 'goldenrod', 'grey35')) +

ylab('Return') +

xlab('Day') +

ggtitle("Returns")

## Equity curves for a single window length

thresholds <- seq(0.50, 0.95, 0.01)

Index <- which(windows == 30)

Trades.win <- matrix(nrow = length((max(windows)+1):nrow(Train)), ncol = length(thresholds) , dimnames = list(c(), c(as.character(thresholds))))

commonData <- c((max(windows)+1):nrow(Train))

j <- 1

for(th in thresholds)

{

trades <- ifelse(modellist.lr[[Index]]$pred$up[(length(modellist.lr[[Index]]$pred$up)-length(commonData)+1):length(modellist.lr[[Index]]$pred$up)] > th, Train$Returns[commonData], ifelse(modellist.lr[[Index]]$pred$down[(length(modellist.lr[[Index]]$pred$down)-length(commonData)+1):length(modellist.lr[[Index]]$pred$down)] > th, -Train$Returns[commonData], 0))

Trades.win[, j] <- trades

j <- j+1

}

Trades.win.DF <- as.data.frame(Trades.win)

Equity.win <- cumsum(Trades.win.DF[, seq(1, 31, 5)])

Equity.win$Underlying <- cumsum(Train$Returns[commonData])

Equity.win$Index <- c(1:nrow(Equity.win))

Equity.winMolten <- melt(Equity.win, id = 'Index')

singleWinPlot <- ggplot(data=Equity.winMolten,

aes(x=Index, y=value, colour=variable)) +

geom_line(size = 0.6) +

scale_colour_brewer(palette = 'Dark2', name = c('Class\nProbability\nThreshold')) +

ylab('Return') +

xlab('Day') +

ggtitle("Returns")

###################################

*Of course, building a production trading model is not the point of the exercise. Apologies for pointing this out; I know most of you already understand this, but I invariably get emails after every post from people questioning the performance of the ‘trading algorithms’ I post on my blog. Just to be clear, I am not posting trading algorithms!! I am sharing my research. Performance on market data, particularly relative performance, is a quick and easy way to interpret the results of this research. I don’t intend for anyone (myself included) to use the simple logistic regression model presented here in a production environment. However, I do intend to use the concepts presented in this post to improve my existing models or build entirely new ones. There is more than enough information in this post for you to do the same, if you so desired.

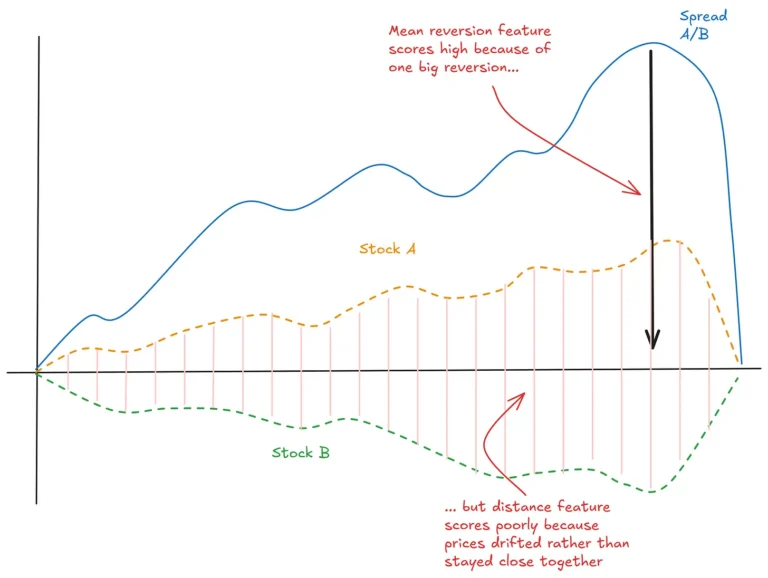


This very much agrees with my own observations. I think that shorter time spans probably capture regime changes more cleanly rather than getting confused by a variety of behaviours. This may be different if more complex models such as deep neural networks are used but they will probably present other problems. Anyway, many thanks for the well-written article.

Thanks for the reply, Tom. I agree with both your points. I am still very interested in applying deep neural nets to algo trading, particularly to streamline the feature engineering phase. But they do present their own unique challenges in their practical application. There is certainly a case for using simpler models, particularly if you can ensemble them together in a clever way.

I think that too… but in many cases, a short time span does not give enough information to your statistical tools: one can verify this point using Random Matrix Theory, for example.

Besides, for statistical estimators/algorithms you should provide them the ‘minimum’ amount of data such that they can work well, i.e. can reach a minimum accuracy (related to the convergence rate of the statistical estimate toward the ‘true’ value). If this minimum amount of data is too much for the financial applications, then it is hopeless…

See for example Clustering Financial Time Series: How Long Is Enough? https://www.ijcai.org/Proceedings/16/Papers/367.pdf

Hey Mic, you raise a good point – it is certainly something of a balancing act between too much data and not enough to reach even a minimum accuracy. My personal experience suggests that it isn’t as black and white as your comment suggests – for example, a particular estimator may be accurate enough given the right amount of data for a certain period in time. Utilising this information in a practical way is quite tricky, but I wouldn’t call it hopeless.

Thanks for the link too. Will be sure to check it out.

RM, Thanks very much for your detailed article. I’ve been trying to reproduce it on my end but am getting hung up on the structure of your EU_daily.csv file and the definition of your volatility. You describe it above as “The volatility measure that I used is the 5-period Average True Range (ATR) minus the 20-period ATR normalized over the last 50 periods.”. I’ve implemented it using the ATR function from the ‘TTR’ library for 5 periods and 20 periods. I divide this difference by the 50 day running min of the 50 day difference. I’m not sure if this is how you meant ‘normalized over the last 50 periods’.

Any help would be great! Thanks again for your work.

Correction…

I divide this difference by the 50 day running min of the difference.

Hey Sven, thanks for reading.

I scaled my volatility measure over 50 days using the following formula:

$2 \cdot \mathrm{cdf}\left(0.5 \cdot \frac{x_i - x_{median}}{x_{P75} - x_{P25}}\right) - 1$ where $x$ is the value of the raw volatility measure, the median and inter-quartile range of $x$ are calculated over the last 50 periods and the subscript $i$ denotes the current value.

There is a very useful compression function built into Zorro that performs this calculation which I used and then exported the data for importing into R.

This was fairly quick and dirty, so you might get better results using other volatility measures.

Cheers


You’re welcome, thanks again for the interesting post.

Understood on the function above. You’re right, there are countless other more complex (and simpler) ways to measure volatility but this is more than sufficient to illustrate the point.

In line 114, you reference a threshold of 52.5%. I assume that this was simply the most recent choice at the time you posted the source code; I couldn’t tell from the included charts above whether this was what you used or whether you used 55% as referenced in the formulas.

I’ve more or less replicated your work (thanks for the help doing so!) and found a similar pattern regarding less data yielding better results although my equity curve does not look quite as stable. Furthermore, I’m using 5:00PM EST as the snapshot for daily rates… a follow on area for research could be testing for stability when predicting different times of the day.

Hi Sven

You’ve got a great eye for detail! Well spotted. I used 55% in the charts shown.

Glad to hear you found a similar pattern regarding the shorter window lengths. I have found that when sampling foreign exchange data at daily frequency, the sampling time tends to have a significant impact for many currency pairs, so I am not surprised that your equity curves look different to mine. Check out this paper for a possible explanation: Currency Returns in Different Time Zones. The results in this paper generally agree with what I’ve experienced in the FX markets.

Thanks for your interesting post.

You find that a short training window works better, but you are using relatively few inputs. If you had more predictors, maybe a larger window would work better.

Your plot of cross-validation performance vs. window length could be smoothed by something like lowess, since the many relative minima and maxima are likely due to noise.

Hi Vivek

It is possible that a larger window would work better with more predictors. Having more predictors essentially leads to a more complex model, which may help overcome the bias problem developed by my model for larger window lengths. If you feel like researching this and sharing the results, I’d love to hear from you.

Indeed, a lowess smoother would reduce the impact of those noisy minima and maxima in the CV-window plot. Thanks for the tip!

Hi and thanks for sharing your research.

My own research also came to the conclusion that shorter windows were more suitable. In particular, extreme events (for instance 2007-2008) had a huge impact on the outcome. However, throwing away data is rather counter-intuitive. As factors are dynamic, it of course makes sense to shorten the estimation window to only capture the most relevant information. Nevertheless, as quants we hypothesize that the future behavior of the market will be somewhat self-similar. So, although old data is outdated, it should contain some (useful) information which could reduce uncertainty about future outcomes.

Recently, I briefly considered another option, which was to train once on a short window and then shrink the result toward the longer window (or alternatively use an ensemble approach and combine all windows). We could set the shrinkage factor either by minimizing a cost function or by simply using an exponential smoothing factor. In any case, the diversification effect should work and we can hope to retain some benefit of old information.
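A minimal sketch of the shrinkage idea, assuming each window produces a probability that the next day is up. The function names, the Brier score as the cost function, and the grid search are illustrative choices, and the sample forecasts are made up.

```python
def shrink(p_short, p_long, lam):
    """Blend two forecasts: lam=0 keeps the short window, lam=1 the long one."""
    return (1.0 - lam) * p_short + lam * p_long

def brier_score(probs, outcomes):
    """Mean squared error between P(up) and realised outcomes (1 = up, 0 = down)."""
    return sum((p - y) ** 2 for p, y in zip(probs, outcomes)) / len(probs)

def best_lambda(p_short, p_long, outcomes, steps=20):
    """Grid-search the shrinkage factor that minimises the Brier score."""
    grid = [i / steps for i in range(steps + 1)]
    def cost(lam):
        blended = [shrink(a, b, lam) for a, b in zip(p_short, p_long)]
        return brier_score(blended, outcomes)
    return min(grid, key=cost)

def exp_weights(n_windows, alpha):
    """Exponential smoothing weights, shortest window first, normalised to sum to 1."""
    raw = [alpha * (1.0 - alpha) ** i for i in range(n_windows)]
    total = sum(raw)
    return [r / total for r in raw]

# Hypothetical held-out forecasts from a short and a long training window.
p_short = [0.7, 0.3, 0.6]
p_long = [0.5, 0.5, 0.5]
outcomes = [1, 0, 1]
lam = best_lambda(p_short, p_long, outcomes)
```

The shrinkage factor should be chosen on data that neither window was trained on, otherwise the search simply rewards whichever window overfits more.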

Another option would be to explicitly model the dynamics of the features. In portfolio optimization with factors, the betas are often modeled as a simple mean-reverting process. So including a lagged term of the features, in order to capture their dynamics, could help, but I would be more careful about overfitting in this case.

Digression: For the threshold choice, sizing positions according to the forecast confidence (after some rescaling) generally works fine and could be interesting to investigate further.
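One possible rescaling, as a sketch only: map the forecast probability linearly to a signed position that is flat at 50% and fully invested at some chosen confidence level. The `full_conf` parameter and the linear mapping are assumptions for illustration.

```python
def position_size(p_up, max_size=1.0, full_conf=0.65):
    """Signed position in [-max_size, max_size] from the probability of an up day.

    The position is zero at p_up = 0.5, grows linearly with the forecast edge,
    and is capped once p_up reaches full_conf (or 1 - full_conf on the short side).
    """
    edge = p_up - 0.5
    scale = full_conf - 0.5
    raw = edge / scale
    return max_size * max(-1.0, min(1.0, raw))
```

For example, `position_size(0.575)` gives roughly half of the maximum long position, while anything at or above 0.65 is capped at the full size.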

I’ll try to investigate these options should I have time and share the results if anyone is interested.

Best, L

Hey Laurent

Thanks so much for your thoughtful comments. I really agree about being cautious about throwing away old data. Intuitively, one would think there would have to be something useful in there, even if it is obscured by the passage of time. I really like the idea of ensembling a bunch of different windows and maybe using a majority vote as the basis for trading decisions. Maybe weighting the more recent data windows more heavily would help too.
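As a rough sketch of what that vote could look like, assuming each window's model emits a signal of +1 (long), -1 (short) or 0 (flat), and that windows are ordered from shortest (most recent data) to longest. The geometric decay used for the weights is just one illustrative choice.

```python
def recency_weights(n_windows, decay=0.5):
    """Weights that fall off geometrically from the shortest window to the longest."""
    return [decay ** i for i in range(n_windows)]

def weighted_vote(signals, weights):
    """Combine per-window signals (+1, -1, 0) into one decision; ties stay flat."""
    score = sum(w * s for s, w in zip(signals, weights))
    if score > 0:
        return 1
    if score < 0:
        return -1
    return 0

# Hypothetical signals from three windows, shortest first.
signals = [1, -1, -1]
plain = weighted_vote(signals, [1.0, 1.0, 1.0])         # simple majority
recent = weighted_vote(signals, recency_weights(3, 0.4))  # favours the short window
```

With equal weights the two longer windows outvote the short one, whereas the recency weighting lets the short window carry the decision.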

I’m super-interested in hearing about any results you are willing to share. I’ll also investigate the ensembling idea as the subject of a future blog post.

Thanks again!

Kris

Hi Kris,

Just wondering if you could give us your csv file so that we can play around with it as well. I realise I can download the exchange rates myself, but I'm not sure how to calculate the atrRegime metric.

Cheers,

Sachin

Hey Sachin

Nice to hear from you mate. Sorry for the slow reply. Yep sure thing, I’ll provide some data sets in csv format that you can play around with. I’ll generate files for various daily exchange rates and close times (which I think is a really interesting feature in itself). The data set I used in my analysis was quite arbitrary – daily EUR/USD sampled at 9:00 GMT. Give me a couple of days and I’ll upload them to the site.

Cheers

Kris

Hey Sachin,

Took me a while, but I’ve uploaded the csv file I used in the analysis, as well as the equivalent file for the EUR/USD exchange rate.

Cheers

Kris

Yes, but where? I can’t see it. Is there an error?

It’s under the heading “Source Code and Data”

Hi. Could you provide a csv file so that we know how the atr regime calculation is done? I’m struggling to run the R code, given that atr regime produces NA values.
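For readers wondering about the NA values, here is a sketch in Python (not the post's R code) of one way to read the post's description: the 5-period ATR minus the 20-period ATR, normalized over the last 50 periods. Two assumptions are made here that the post does not confirm: the ATR is a simple moving average of the true range, and the normalization is a min-max rescaling to [0, 1]. Whatever the exact choices, the lookbacks leave the first bars undefined, which is the usual source of leading NA values.

```python
def true_range(high, low, close):
    """True range per bar; the first bar is undefined (no previous close)."""
    tr = [None]
    for i in range(1, len(close)):
        tr.append(max(high[i] - low[i],
                      abs(high[i] - close[i - 1]),
                      abs(low[i] - close[i - 1])))
    return tr

def rolling(values, n, func):
    """Apply func to each full trailing window of n defined values, else None."""
    out = []
    for i in range(len(values)):
        window = values[i - n + 1:i + 1] if i - n + 1 >= 0 else []
        if len(window) == n and all(v is not None for v in window):
            out.append(func(window))
        else:
            out.append(None)
    return out

def min_max_last(window):
    """Position of the latest value within the window's range (assumed normalisation)."""
    lo, hi = min(window), max(window)
    return (window[-1] - lo) / (hi - lo) if hi > lo else 0.5

def atr_regime(high, low, close, fast=5, slow=20, norm=50):
    """Fast ATR minus slow ATR, rescaled over the trailing norm periods."""
    tr = true_range(high, low, close)
    atr_fast = rolling(tr, fast, lambda w: sum(w) / len(w))
    atr_slow = rolling(tr, slow, lambda w: sum(w) / len(w))
    diff = [f - s if f is not None and s is not None else None
            for f, s in zip(atr_fast, atr_slow)]
    return rolling(diff, norm, min_max_last)
```

With these defaults the first `slow + norm - 1` values (69 bars) are undefined, so those rows need to be dropped before training.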

Hi,

According to machine learning principles, changing the training size just changes the variance of the output, i.e. a bigger training size decreases this variance.

For my system, which runs on 1-minute charts for 8 symbols, I use a training size of 20k; however, I tested it over the range 2k-100k and it behaved exactly as the principles say, with the variance of the final result decreasing or increasing accordingly. As your test is done on daily charts and does not generate a lot of trades, I have a feeling that the results obtained may be heavily contaminated by output variance. Additionally, this type of setting

if P_{up} >= 0.55, go long at open

if P_{down} >= 0.55, go short at open

if 0.45 < P_{up} < 0.55 (equivalent to 0.45 < P_{down} < 0.55), remain flat

where P_{up} and P_{down} are the calculated probabilities for the next day’s market direction to be positive and negative respectively.

Positions were liquidated at the close.

just introduces additional noise. Where do those values come from?

Just my opinion.

Krzysztof
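To put a rough number on the concern about few trades, the sketch below computes the sampling error of a hit rate under the simplifying assumption that trades are independent coin flips, which real trades are not. The trade counts are illustrative, not taken from the post.

```python
import math

def hit_rate_std_error(p, n_trades):
    """Standard error of an observed hit rate, assuming independent trades."""
    return math.sqrt(p * (1.0 - p) / n_trades)

def z_score_vs_coin_flip(hit_rate, n_trades):
    """How many standard errors the hit rate sits above a 50% coin flip."""
    return (hit_rate - 0.5) / math.sqrt(0.25 / n_trades)

# A 55% hit rate is about one standard error from chance over 100 trades,
# but about five standard errors over 2500 trades.
z_small = z_score_vs_coin_flip(0.55, 100)
z_large = z_score_vs_coin_flip(0.55, 2500)
```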

Hello Krzysztof

Thanks for posting your thoughts and opinions. You raise some good points.

The thing with machine learning is that there is only one thing worse than training an algorithm on too little data, and that’s training it on irrelevant data. The output variance you mentioned is a property of any machine learning model, and controlling the training window is one way to control that variance. As any trader will tell you, this is largely a game of trade-offs that allow you to find a sweet spot where you can operate profitably, rather than an exercise in theoretical modelling principles. The advice I keep coming back to is to focus on the practical and try not to get too enamoured with how things “should” work from an academic or theoretical standpoint. I’m not saying forget about theory, but I am saying that there are plenty of textbooks about machine learning that won’t help you pull money out of the markets.

Regarding the class probability threshold filter: I agree that we should generally reduce our parameters to the extent possible, but this one is useful from a number of different perspectives. For starters, if your model really is useful, then its higher probability predictions should be more accurate. I use this property to test for the robustness of a machine learning model. Also, when this is true, you can tune your strategy according to your personal preferences for trade frequency, if you have any. But hey, there is no one “right” way to do this, and I can also understand why some people would shy away from additional parameters. Each to their own.
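A minimal sketch of that robustness check: apply the probability threshold rule, then compare trade counts and hit rates as the threshold is raised. The function names are illustrative and the probabilities and outcomes below are invented for the example.

```python
def signal(p_up, threshold=0.55):
    """+1 (long) if P(up) >= threshold, -1 (short) if P(down) >= threshold, else 0."""
    if p_up >= threshold:
        return 1
    if 1.0 - p_up >= threshold:
        return -1
    return 0

def accuracy_by_threshold(probs, outcomes, thresholds):
    """Map each threshold to (number of trades, hit rate) over the sample.

    outcomes holds +1 for an up day and -1 for a down day.
    """
    result = {}
    for t in thresholds:
        trades = [(signal(p, t), y) for p, y in zip(probs, outcomes)]
        trades = [(s, y) for s, y in trades if s != 0]
        hits = sum(1 for s, y in trades if s == y)
        result[t] = (len(trades), hits / len(trades) if trades else None)
    return result

# Hypothetical out-of-sample probabilities and realised directions.
probs = [0.62, 0.58, 0.41, 0.52, 0.37, 0.56]
outcomes = [1, -1, -1, 1, -1, 1]
table = accuracy_by_threshold(probs, outcomes, [0.55, 0.60])
```

If the model is useful, the hit rate should tend to rise as the threshold increases, at the cost of fewer trades. With only a handful of trades per bucket the comparison is very noisy, so it needs a reasonably long out-of-sample period.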

One final point – I don’t post trading systems here that I expect anyone to actually trade (I believe in sharing, but I can’t post my systems for the whole world to see). When I do post a system, it is simply as an aid to help explore a particular hypothesis or idea. I’m not sure if you were comparing the strategy in this post to the one you referred to, but I just wanted to clarify in case there was a misunderstanding about the intent of the things I post.